Account Balances

Data Contract Template

Ensure account balance data is fresh, complete, and reliable before it is used for financial reporting, reconciliation, liquidity monitoring, and regulatory compliance.

Fabiana Ferraz

Technical Writer at Soda

Data contract description

This data contract enforces schema stability, a parameterized freshness SLA on balance_date, and required identifiers and balance fields to ensure reliable point-in-time balance reporting. It blocks future-dated balances, prevents duplicate snapshots per account and date, flags negative closing balances, and verifies that each day’s opening_balance matches the previous day’s closing_balance within a configurable tolerance. These checks protect balance accuracy for downstream accounting, liquidity, and regulatory workflows.
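The opening-versus-previous-closing rule described above can be illustrated outside of Soda with a small Python sketch. This is not the contract's own implementation: the `Balance` type, the `continuity_breaks` function, and the default tolerance of 0.01 are hypothetical names and values chosen for illustration, and the sketch assumes one snapshot per account per calendar day.

```python
from datetime import date, timedelta
from decimal import Decimal
from typing import Iterable, List, NamedTuple


class Balance(NamedTuple):
    """One daily snapshot; field names mirror the contract's columns."""
    account_id: str
    balance_date: date
    opening_balance: Decimal
    closing_balance: Decimal


def continuity_breaks(
    rows: Iterable[Balance],
    tolerance: Decimal = Decimal("0.01"),  # hypothetical default, not from the contract
) -> List[Balance]:
    """Return rows whose opening_balance differs from the previous day's
    closing_balance (same account) by more than `tolerance`."""
    rows = list(rows)
    by_key = {(r.account_id, r.balance_date): r for r in rows}
    breaks = []
    for r in rows:
        previous = by_key.get((r.account_id, r.balance_date - timedelta(days=1)))
        if previous is None:
            continue  # no prior-day snapshot to compare against
        if abs(r.opening_balance - previous.closing_balance) > tolerance:
            breaks.append(r)
    return breaks


sample = [
    Balance("A1", date(2024, 1, 1), Decimal("100.00"), Decimal("150.00")),
    Balance("A1", date(2024, 1, 2), Decimal("150.00"), Decimal("120.00")),
    Balance("A1", date(2024, 1, 3), Decimal("125.00"), Decimal("90.00")),
]
```

With this sample, only the snapshot for 2024-01-03 is reported, because its opening balance of 125.00 does not match the prior closing balance of 120.00. Using `Decimal` rather than `float` avoids rounding noise being mistaken for a real break.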

account_balances_data_contract.yaml

dataset:
variables:
  FRESHNESS_HOURS:
    default: 24
checks:
  - schema:
      allow_extra_columns: false
      allow_other_column_order: false
  - row_count:
      threshold:
        must_be_greater_than: 0
  - freshness:
      column: balance_date
      threshold:
        unit: hour
        must_be_less_than_or_equal: ${var.FRESHNESS_HOURS}
  # Dataset integrity rules
  - failed_rows:
      name: "balance_date must not be in the future"
      qualifier: balance_date_not_future
      expression: balance_date > CURRENT_TIMESTAMP
  - failed_rows:
      name: "No duplicate account balance snapshots (account_id + balance_date)"
      qualifier: duplicate_account_snapshot
      query: |
        SELECT account_id, balance_date
        FROM account_balances
        GROUP BY account_id, balance_date
        HAVING COUNT(*) > 1
      threshold:
        must_be: 0
  - failed_rows:
      name: "Opening and closing balances must not be negative beyond allowed overdraft tolerance"
      qualifier: excessive_negative_balance
      expression

columns:
  - name: account_id
    data_type: string
    checks:
      - missing:
          name: No missing values
      - invalid:
          name: "account_id length guardrail"
          valid_min_length: 1
          valid_max_length: 64
  - name: balance_date
    data_type: date
    checks:
      - missing:
          name: No missing values
  - name: opening_balance
    data_type: decimal
    checks:
      - missing:
          name: No missing values
  - name: closing_balance
    data_type: decimal
    checks:
      - missing:
          name: No missing values
  - name: currency
    data_type: string
    checks:
      - missing:
          name: No missing values
      - invalid:
          name: "Currency must be ISO-4217 (3 uppercase letters)"
          valid_format:
            name: ISO-4217 code
            regex: "^[A-Z]{3}$"
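To see what two of these rules catch, the following sketch runs the contract's duplicate-snapshot query and its currency regex against a small in-memory SQLite table. It only mimics the checks for illustration and does not use Soda. The sample rows and the helper names `make_sample_db`, `find_duplicate_snapshots`, and `invalid_currencies` are assumptions introduced here.

```python
import re
import sqlite3

# Query text copied from the contract's duplicate_account_snapshot check.
DUPLICATE_SNAPSHOT_QUERY = """
SELECT account_id, balance_date
FROM account_balances
GROUP BY account_id, balance_date
HAVING COUNT(*) > 1
"""

# Regex copied from the contract's currency format check.
CURRENCY_PATTERN = re.compile(r"^[A-Z]{3}$")


def make_sample_db() -> sqlite3.Connection:
    """Build an in-memory table with made-up rows, including one duplicate
    snapshot and one lowercase currency code."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE account_balances ("
        "account_id TEXT, balance_date TEXT, "
        "opening_balance NUMERIC, closing_balance NUMERIC, currency TEXT)"
    )
    conn.executemany(
        "INSERT INTO account_balances VALUES (?, ?, ?, ?, ?)",
        [
            ("A1", "2024-01-01", 100, 150, "USD"),
            ("A1", "2024-01-01", 100, 150, "USD"),  # duplicate snapshot
            ("A2", "2024-01-01", 0, 10, "usd"),     # fails the currency regex
            ("A3", "2024-01-01", 5, 5, "EUR"),
        ],
    )
    return conn


def find_duplicate_snapshots(conn: sqlite3.Connection) -> list:
    """Return (account_id, balance_date) pairs that appear more than once."""
    return conn.execute(DUPLICATE_SNAPSHOT_QUERY).fetchall()


def invalid_currencies(conn: sqlite3.Connection) -> list:
    """Return distinct currency values that do not match the contract regex."""
    cursor = conn.execute(
        "SELECT DISTINCT currency FROM account_balances ORDER BY currency"
    )
    return [c for (c,) in cursor if not CURRENCY_PATTERN.match(c)]
```

On the sample data, the duplicate query returns the single pair for account `A1` on `2024-01-01`, which would violate the contract's `must_be: 0` threshold, and the currency check flags `usd`.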

How to Enforce Data Contracts with Soda

Embed data quality through data contracts at any point in your pipeline.

# pip install soda-{data source} for other data sources

pip install soda-postgres

# verify the contract locally against a data source

soda contract verify -c contract.yml -ds ds_config.yml

# publish and schedule the contract with Soda Cloud

soda contract publish -c contract.yml -sc sc_config.yml

Check out the CLI documentation to learn more.
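One way to embed the verify step in a pipeline is to call the CLI from a Python task and stop when verification does not succeed. The sketch below reuses only the command and flags shown above. It assumes that the `soda` executable is on the `PATH` and that a non-zero exit code signals a failed verification, which should be confirmed against the CLI documentation. The function names are hypothetical.

```python
import subprocess
from typing import List


def build_verify_command(contract_path: str, data_source_path: str) -> List[str]:
    """Assemble the `soda contract verify` invocation shown above."""
    return [
        "soda", "contract", "verify",
        "-c", contract_path,
        "-ds", data_source_path,
    ]


def verify_contract(contract_path: str, data_source_path: str) -> bool:
    """Run the verification and report whether the process exited with 0.

    Assumption: the CLI returns a non-zero exit code when verification
    fails; check the CLI documentation before relying on this.
    """
    command = build_verify_command(contract_path, data_source_path)
    result = subprocess.run(command, check=False)
    return result.returncode == 0
```

A pipeline step could then call `verify_contract("contract.yml", "ds_config.yml")` and raise an error or skip downstream loads when it returns `False`.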

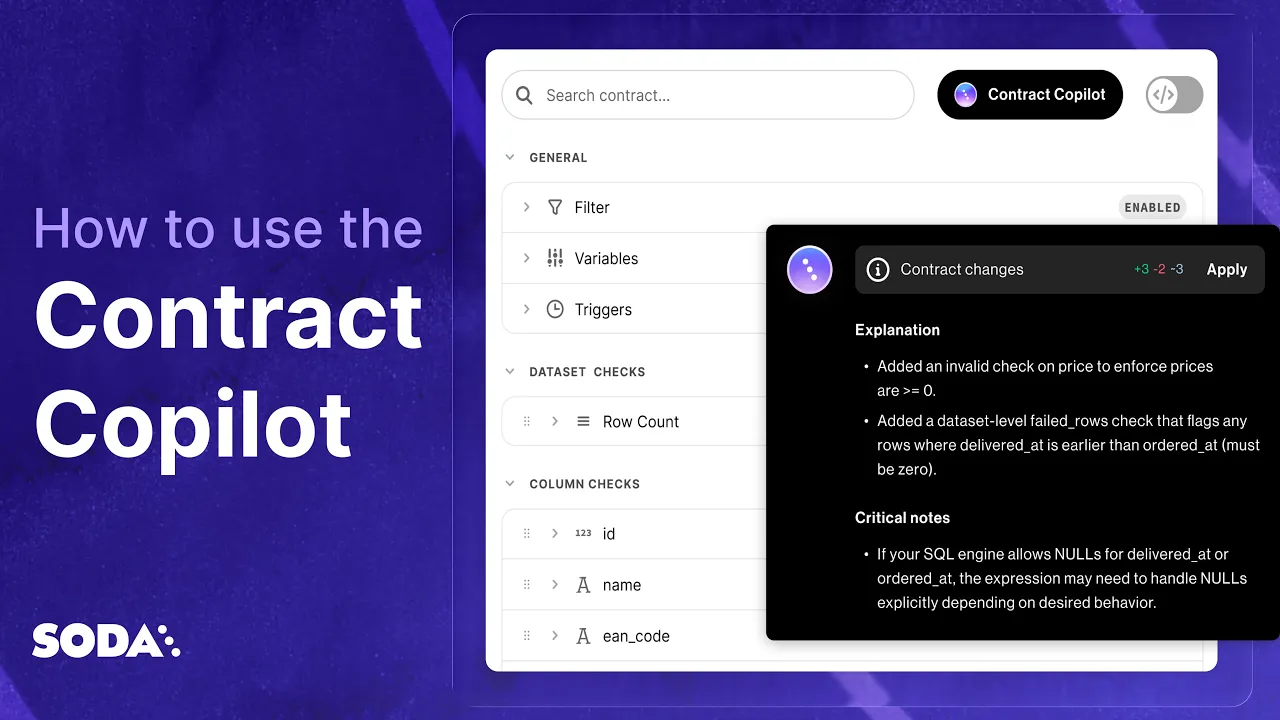

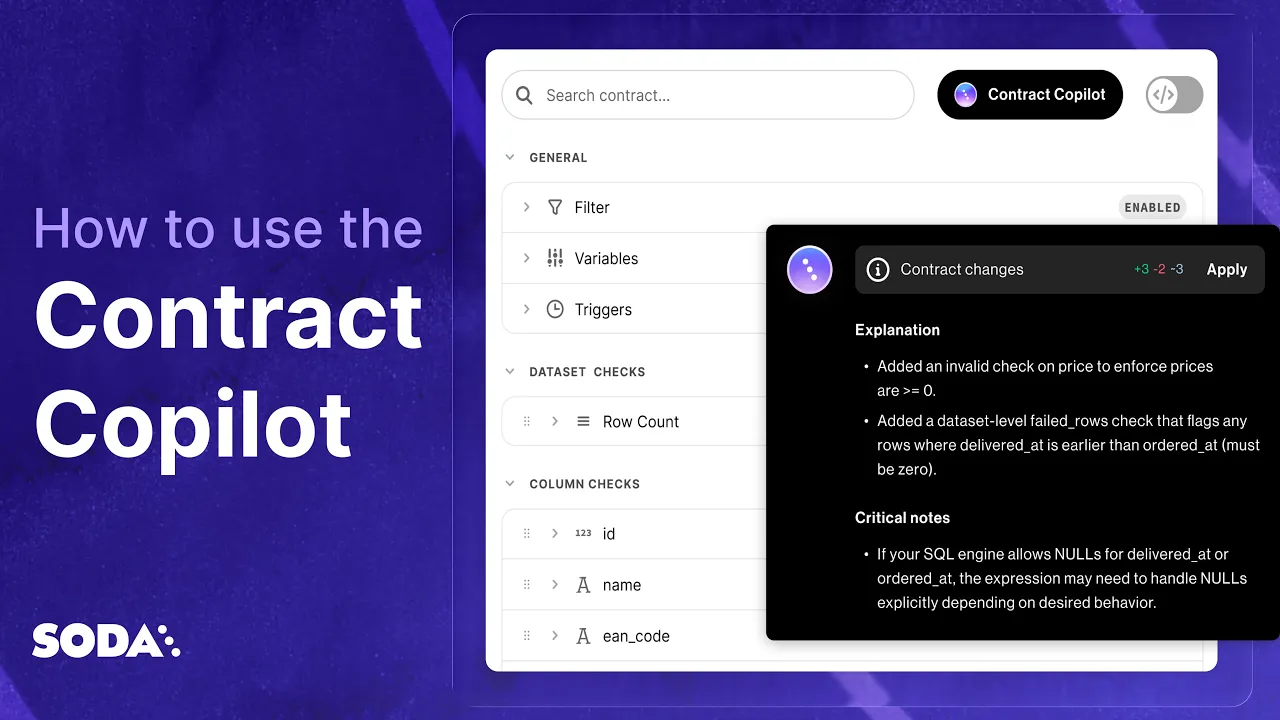

How to Automatically Create Data Contracts in One Click

Automatically write and publish data contracts using Soda's AI-powered data contract copilot.

Make data contracts work in production

Business knows what good data looks like. Engineering knows how to deliver it at scale. Soda unites both, turning governance expectations into executable contracts.

Explore more data contract templates

One new data contract template every day, across industries and use cases

4.4 of 5

Your data has problems.

Now they fix themselves.

Automated data quality, remediation, and management.

One platform, agents that do the work, you approve.

Trusted by
